New GAINBOARD 2803S Inference Accelerator Server Provides 1,084 TOPS at 110W

Press Release Summary:

The GAINBOARD™ 2803S Inference Accelerator Server combines dual Xeon processors with 64 individual Lightspeeur® 2803S chips housed in four PCIe cards. The server is based on the Matrix Processing Engine and AI Processing in Memory architecture. The PCIe Accelerator card's design allows it to process AI models in parallel and to operate in cascade mode. Each PCIe card contains 16 chips supported by four Xilinx FPGAs. The Lightspeeur® 2803S supports ResNet, MobileNet, ShiftNet and VGG neural networks.

Original Press Release:

Gyrfalcon Introduces GAINBOARD™ 2803S Inference Accelerator Server for the Industry's Best AI Performance-Energy Ratio

Makes It Possible for Data Center Operators Providing Cloud AI Services to Upgrade and Deliver Fastest, Most Precise AI with Low Cost of Ownership

MILPITAS, Calif., Nov. 29, 2018 /PRNewswire/ -- Gyrfalcon Technology Inc. (GTI), the world's leading developer of low-cost, low-power, high-performance Artificial Intelligence (AI) processors, today announced its second-generation server solution, the GAINBOARD™ 2803S Inference Accelerator Server. The server solution can be installed as an upgrade to data centers to rapidly deploy the highest performing AI acceleration for large numbers of data models running in parallel. Providing 1,084 TOPS at 110W, the GAINBOARD™ 2803S provides the industry-best ratio of performance-to-power use and offers low cost of ownership for data centers.
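The performance-to-power ratio behind these figures can be worked out directly. The following is a minimal sketch that uses only numbers quoted in this release (the server-level 1,084 TOPS at 110W, and the per-chip 16.8 TOPS at 0.7W given in the technical specifications); the variable and function names are illustrative. The release does not explain how the server-level figures relate to the sum of the per-chip figures, so the sketch simply reports both side by side.

```python
# Figures quoted in the press release.
SERVER_TOPS = 1084.0      # server-level throughput
SERVER_WATTS = 110.0      # server-level power figure
CHIP_TOPS = 16.8          # single Lightspeeur 2803S chip
CHIP_WATTS = 0.7
CHIPS_PER_SERVER = 64


def tops_per_watt(tops: float, watts: float) -> float:
    """Performance-to-power ratio in TOPS per watt."""
    return tops / watts


server_eff = tops_per_watt(SERVER_TOPS, SERVER_WATTS)
chip_eff = tops_per_watt(CHIP_TOPS, CHIP_WATTS)

# Plain sums over 64 chips, for comparison with the server-level figures.
chips_total_tops = CHIP_TOPS * CHIPS_PER_SERVER
chips_total_watts = CHIP_WATTS * CHIPS_PER_SERVER

print(f"Server ratio:   {server_eff:.2f} TOPS/W")        # 9.85 TOPS/W
print(f"Per-chip ratio: {chip_eff:.2f} TOPS/W")          # 24.00 TOPS/W
print(f"64 chips, sum:  {chips_total_tops:.1f} TOPS at {chips_total_watts:.1f} W")  # 1075.2 TOPS at 44.8 W
```

The quoted server-level ratio works out to roughly 9.85 TOPS/W, against 24 TOPS/W for a single chip taken in isolation.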

Unlike general-purpose GPUs, which run fast but not lean, GTI's server solution for existing data centers has been built from the ground up to process AI data in this type of environment. In contrast to the industry leader, whose general-purpose GPU delivers high performance at the cost of heavy power consumption and unnecessarily high overheads, GTI has directly addressed the rapidly growing demand for AI acceleration within strict constraints on physical space, cooling equipment costs and other operating costs.

"Many data center operators have complained about having to wait for product from other providers, and now they can quickly get the solution they need along with increased performance and significantly lower power use," said Frank Lin, president of GTI. "In the data center, the top business concerns are the ability to provide higher service than competitors while keeping costs down. Our GAINBOARD™ 2803S Inference Accelerator Server has a lower equipment cost than competing solutions, delivers a lower monthly energy bill and reduces cooling equipment requirements. It is an all-around win to achieve AI acceleration without the drawbacks that data center providers have experienced with other AI solutions."

Each GAINBOARD™ 2803S Inference Accelerator Server contains dual Xeon processors and 64 individual Lightspeeur® 2803S chips (introduced last month) housed in four PCIe cards. Each card contains 16 chips, supported by four Xilinx FPGAs. Together, they work to provide a high-performance, low-power state-of-the-art solution that is reliable and flexible for rapid implementation and heavy usage for AI acceleration.
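The composition described above can be modeled as a small data structure. This is only an illustrative sketch: the class and field names are hypothetical and do not correspond to any GTI software interface. The counts come from the release; the even split of chips across FPGAs is an assumption, since the release does not say how the 16 chips on a card are distributed among its four FPGAs.

```python
from dataclasses import dataclass
from typing import Iterator, Tuple


@dataclass(frozen=True)
class ServerLayout:
    """Hypothetical model of the server composition described in the release."""
    host_cpus: int = 2         # dual Xeon processors
    cards: int = 4             # PCIe accelerator cards
    chips_per_card: int = 16   # Lightspeeur 2803S chips per card
    fpgas_per_card: int = 4    # Xilinx FPGAs per card

    @property
    def total_chips(self) -> int:
        return self.cards * self.chips_per_card

    @property
    def total_fpgas(self) -> int:
        return self.cards * self.fpgas_per_card

    @property
    def chips_per_fpga_if_even(self) -> float:
        # ASSUMPTION: chips are split evenly across a card's FPGAs.
        return self.chips_per_card / self.fpgas_per_card

    def chip_ids(self) -> Iterator[Tuple[int, int]]:
        """Yield a (card index, chip index on card) pair for every chip."""
        for card in range(self.cards):
            for chip in range(self.chips_per_card):
                yield (card, chip)


layout = ServerLayout()
print(layout.total_chips)   # 64
print(layout.total_fpgas)   # 16
```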

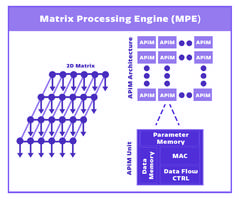

At the heart of the GAINBOARD™ 2803S Inference Accelerator Server is the proprietary and patented GTI technology, the Matrix Processing Engine (MPE) and AI Processing in Memory (APiM) architecture that accelerates AI using convolutional neural networks (CNN). This technology is used throughout GTI's portfolio of chips offered for edge and cloud AI and enables the data to be processed in-logic for the fastest processing combined with the best ratio of performance to power.

Technical specifications

The design of the PCIe Accelerator card allows it to process AI models in parallel and to operate in cascade mode. Cascade mode allows very large and complex models to be spread across the individual 2803S chips being used in the data center to process AI. The processing is cascaded from one chip to the next without requiring the intervention of a host processor, maintaining the high performance and low energy use while delivering the maximum level of flexibility. A single Lightspeeur® 2803S chip delivers 16.8 TOPS at just 0.7W with latency as low as 2 milliseconds for ultra-responsiveness. The Lightspeeur® 2803S supports ResNet, MobileNet, ShiftNet as well as VGG neural networks for inference and training.
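The idea behind cascade mode, spreading a large model over several chips and passing intermediate results from one chip to the next, can be illustrated with a toy software model. This sketch is not GTI's implementation or API: the greedy partitioning rule, the per-layer "cost" units, the per-chip capacity and all function names are assumptions chosen purely for illustration.

```python
from typing import Callable, List, Sequence

Layer = Callable[[float], float]


def partition_layers(costs: Sequence[int], capacity: int) -> List[List[int]]:
    """Greedily group consecutive layers into stages, one stage per chip.

    `costs` are hypothetical per-layer resource costs and `capacity` is a
    hypothetical per-chip budget; neither is taken from the release.
    """
    stages: List[List[int]] = []
    current: List[int] = []
    used = 0
    for idx, cost in enumerate(costs):
        if cost > capacity:
            raise ValueError(f"layer {idx} does not fit on a single chip")
        if used + cost > capacity:
            # Current chip is full: close this stage and start the next chip.
            stages.append(current)
            current, used = [], 0
        current.append(idx)
        used += cost
    if current:
        stages.append(current)
    return stages


def run_cascade(layers: Sequence[Layer], stages: List[List[int]], x: float) -> float:
    """Feed each stage's output straight into the next stage, in order."""
    for stage in stages:          # each stage stands in for one chip
        for idx in stage:
            x = layers[idx](x)
    return x


# Toy "model": five scalar layers with made-up costs.
layers: List[Layer] = [
    lambda v: v + 1,
    lambda v: v * 2,
    lambda v: v - 3,
    lambda v: v * v,
    lambda v: v + 0.5,
]
costs = [3, 4, 2, 5, 1]

stages = partition_layers(costs, capacity=7)
result = run_cascade(layers, stages, 1.0)
print(stages)   # [[0, 1], [2, 3], [4]]
print(result)   # 1.5
```

In this toy model the stages run back to back inside one loop; it makes no attempt to represent the chip-to-chip hand-off without host intervention that the release describes, only the partitioning of one model across several devices.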

Availability and GTI's product suite

GTI's mission is to provide an upgrade path and opportunities for customization across the AI spectrum, whether customers are designing edge AI devices, need a full server solution or want to source GTI's PCIe cards to make their own custom servers. With AI requiring domain-specific designs, GTI works with its customers to enable business-specific solutions for their unique market opportunities, providing the AI acceleration technology and building blocks.

The GAINBOARD™ 2803S Inference Accelerator Server will be available from early Q1 2019 and will join a suite of products already available from GTI: the server solutions using 2801S multichip board configuration for advanced edge and data center applications; and the Lightspeeur® 2801S and 2803S Neural Accelerators. Products are shipping commercially to electronics companies including Fujitsu, LG and Samsung and are all available now for qualified customers.

GTI will demo its portfolio of products, including the GAINBOARD™ 2803S Inference Accelerator Server, at CES 2019 and is organizing demo visits in advance. For more information, visit https://gyrfalcontech.ai/solutions/

About Gyrfalcon Technology Inc.

Gyrfalcon Technology Inc. (GTI) is the world's leading developer of high-performance, low-power AI accelerators packaged in low-cost, small-sized chips. Founded by veteran Silicon Valley entrepreneurs and Artificial Intelligence scientists, GTI drives adoption of AI by bringing the power of cloud Artificial Intelligence to local devices, and improves Cloud AI with greater performance and efficiency, providing customers with the utmost in AI customization for new equipment and a path to AI upgrades. For more information on GTI, visit www.gyrfalcontech.ai.

Media Contact

Caroline Whitehouse

SHIFT Communications

+1.617.779.1815

CWhitehouse@shiftcomm.com